Dear Sentinels

This week we are back to AI, and it is a doozy. First, we return to TOON, the Token-Oriented Object Notation. As the name suggests, it is an object notation, and it can save you money by reducing Large Language Model (LLM) token costs! Then, in the research article, we return to the original GPT-3 paper. The authors mention training their LLM on Wikipedia multiple times, while also highlighting the all-too-scary potential for manipulation we now face.

Also, I am on vacation over the next week, but not to worry: my next article is already in the works and will be done before we go.

News from around the web

If you're building applications with Large Language Models (LLMs), you know that every API call has a cost tied directly to token count. We often send structured data in familiar formats like JSON, but an LLM does not process information that way: it processes tokens. A new data notation called TOON (Token-Oriented Object Notation) offers a token-native alternative, and understanding it reveals some surprising truths about building more efficient AI.

Every API call you make, every performance metric you track, and every dollar you spend revolves around the number of input and output tokens processed. Verbose, punctuation-heavy formats are a fundamental mismatch for that model, because every brace, bracket, and quote is paid for in tokens. Shifting to a "token-first" perspective is the first step toward building applications that are not just functional, but also efficient and cost-effective. Across the benchmarked test datasets, TOON used 49.1% fewer tokens than standard JSON and 56.0% fewer tokens than XML to represent the same information. It achieves this in two ways: a minimal syntax that removes redundant punctuation such as braces, brackets, and most quotes, and a tabular structure that declares keys only once for an entire dataset.
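To make the "declare keys once" idea concrete, here is a minimal Python sketch of a TOON-style tabular encoder. It is a simplification under stated assumptions, not the official implementation: the function name `encode_toon_table` and the sample `users` data are hypothetical, every row is assumed to share the same keys, and values are assumed to contain no commas or newlines (the real format has quoting rules that this sketch ignores). Character counts are printed only as a rough proxy; actual token savings depend on the tokenizer.

```python
import json


def encode_toon_table(name, rows):
    """Encode a uniform list of dicts in a TOON-style tabular layout.

    Simplified sketch: assumes all rows share the same keys in the same
    order and that no value needs quoting or escaping.
    """
    if not rows:
        raise ValueError("this sketch only handles non-empty arrays")
    fields = list(rows[0].keys())
    for row in rows:
        if list(row.keys()) != fields:
            raise ValueError("all rows must share the same keys in the same order")
    # Header: array name, explicit length, and the field names declared once.
    header = f"{name}[{len(rows)}]{{{','.join(fields)}}}:"
    # Body: one indented, comma-separated line per row, values only.
    body = ["  " + ",".join(str(row[field]) for field in fields) for row in rows]
    return "\n".join([header] + body)


# Hypothetical sample data for illustration.
users = [
    {"id": 1, "name": "Alice", "role": "admin"},
    {"id": 2, "name": "Bob", "role": "user"},
]

toon_text = encode_toon_table("users", users)
json_text = json.dumps({"users": users})

print(toon_text)
# users[2]{id,name,role}:
#   1,Alice,admin
#   2,Bob,user

# Character counts are only a rough stand-in for token counts.
print(len(json_text), "characters as JSON,", len(toon_text), "as TOON-style text")
```

The JSON version repeats `"id"`, `"name"`, and `"role"` in every object, while the tabular form states them once in the header, which is where most of the saving comes from on uniform data.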

When defining a data array, TOON declares its length and field names explicitly. These declarations act as built-in instructions for the LLM, helping it validate the data it receives and understand the expected structure before it begins processing the content. This makes TOON not just a more compact format, but a more predictable one, reducing the risk of the LLM hallucinating. The accuracy picture is more nuanced, though, and that nuance is critical for developers choosing the right tool for their stack. For example, in retrieval accuracy benchmarks, TOON achieved the highest accuracy on gpt-5-nano (96.1%) and grok-4-fast (49.4%). However, it was slightly outperformed by standard JSON on claude-haiku and by CSV and XML on gemini-2.5-flash. The crucial takeaway is the overall trade-off: across all models, TOON achieves 70.1% accuracy (vs JSON's 65.4%) while using 46.3% fewer tokens.
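The same explicit length and field list that guide the LLM can also be checked mechanically. The sketch below shows one way that validation might look in Python. It is illustrative only: `decode_toon_table`, the `HEADER` pattern, and the sample text are hypothetical, it handles only a single flat table of the form `name[N]{field,...}:`, and it keeps every value as a string with no quoting support.

```python
import re

# Matches a header such as: users[2]{id,name,role}:
HEADER = re.compile(r"^(\w+)\[(\d+)\]\{([^}]*)\}:$")


def decode_toon_table(text):
    """Parse a single TOON-style table and check it against its header."""
    lines = [line for line in text.strip().splitlines() if line.strip()]
    if not lines:
        raise ValueError("empty input")
    match = HEADER.match(lines[0].strip())
    if match is None:
        raise ValueError("unrecognised header line")
    name = match.group(1)
    declared_rows = int(match.group(2))
    fields = match.group(3).split(",")
    rows = [line.strip().split(",") for line in lines[1:]]
    # The declared length lets us detect truncated or padded data.
    if len(rows) != declared_rows:
        raise ValueError(f"header declares {declared_rows} rows, found {len(rows)}")
    # The declared fields let us detect rows with missing or extra values.
    for row in rows:
        if len(row) != len(fields):
            raise ValueError(f"expected {len(fields)} values per row, got {len(row)}")
    return name, [dict(zip(fields, row)) for row in rows]


# Hypothetical sample text for illustration.
sample = "users[2]{id,name,role}:\n  1,Alice,admin\n  2,Bob,user"
table_name, records = decode_toon_table(sample)
print(table_name, records)
```

If a response were cut off after the first row, the row count would no longer match the declared `[2]`, and the function would raise a `ValueError` instead of silently returning partial data.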

Its ideal use case, or "sweet spot," is uniform arrays of objects: tabular data where multiple rows share the same structure, such as a list of 10,000 user profiles or product SKUs. TOON is best viewed as a high-performance tool for the right job, well suited to optimising large, structured datasets being sent to an LLM. For the right kind of data, it can significantly cut costs and reduce prompt complexity.

This raises a critical question for developers: what other parts of our LLM stack are we taking for granted?

Summary

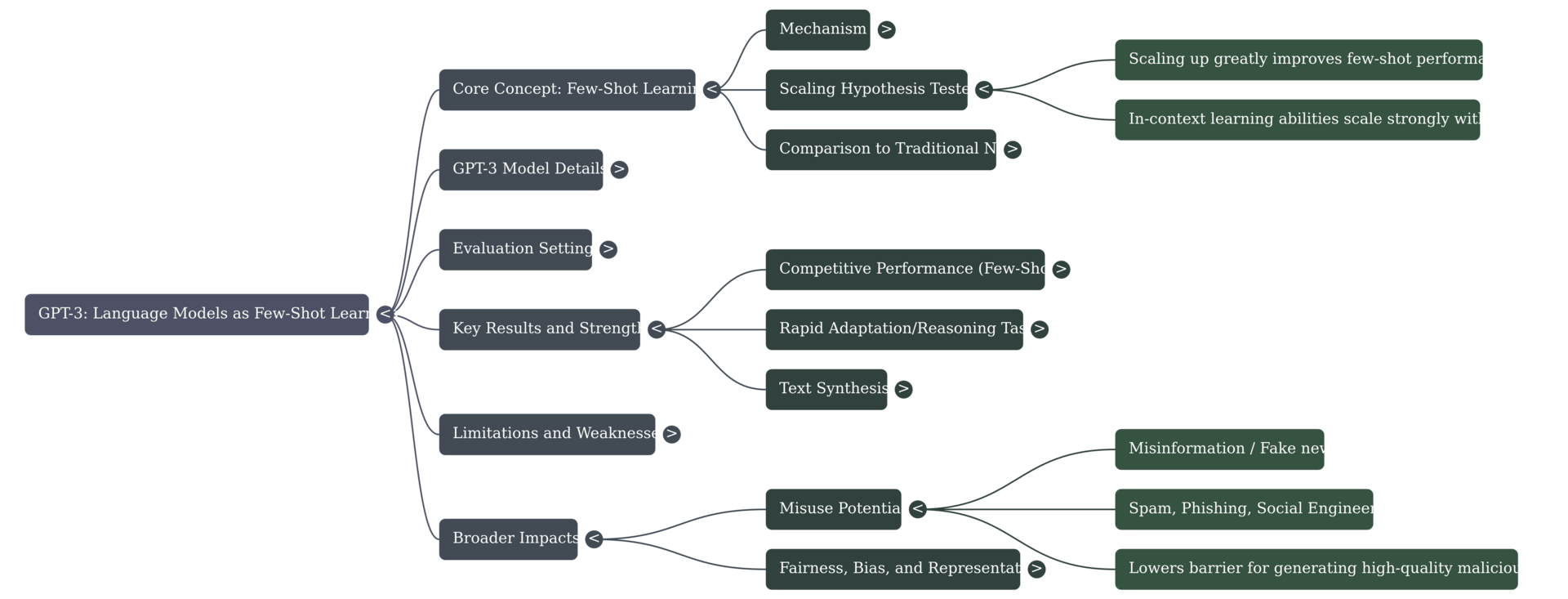

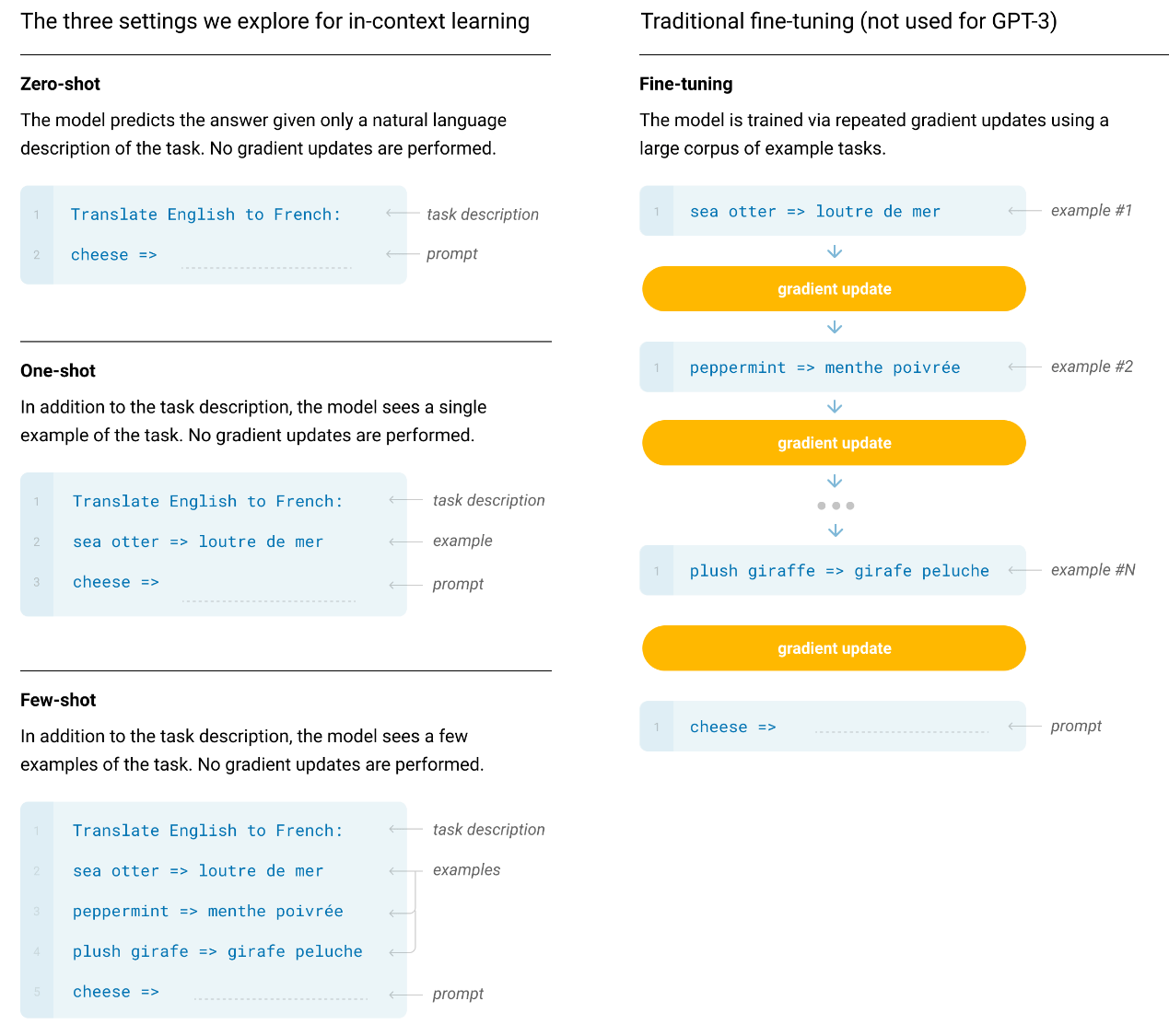

Scaling up language models, notably the 175 billion parameter GPT-3, significantly improves task-agnostic, few-shot performance, often achieving results competitive with prior state-of-the-art fine-tuning methods. GPT-3 performs tasks through pure text interaction without gradient updates and shows proficiency across diverse Natural Language Processing (NLP) tasks, including translation, question answering, and complex on-the-fly reasoning.

Background

Prior NLP systems achieved progress through large-scale pre-training followed by task-specific fine-tuning, which still required large task-specific datasets and so limited applicability. This conventional paradigm risked models exploiting spurious correlations in narrow datasets, and the resulting models often failed to generalise well. Humans, by contrast, generally perform new language tasks from simple instructions or a few examples, a level of fluidity that current NLP systems largely struggle to match. The present work argues that scaling model capacity greatly improves the model's ability to use its pre-training skills to rapidly adapt at inference time through "in-context learning".
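In practice, in-context learning means the "training examples" live in the prompt itself rather than in gradient updates. The Python sketch below shows one plausible way to assemble such a prompt. The helper `build_few_shot_prompt`, the `=>` separator, and the translation pairs are assumptions chosen for illustration, not a reproduction of the paper's exact prompts.

```python
def build_few_shot_prompt(task_description, examples, query):
    """Assemble a prompt from a task description, K demonstrations, and a query.

    With zero examples this is a zero-shot prompt, with one a one-shot
    prompt, and with several a few-shot prompt. No model weights change.
    """
    lines = [task_description]
    for source, target in examples:
        lines.append(f"{source} => {target}")
    # The final line is left open for the model to complete.
    lines.append(f"{query} =>")
    return "\n".join(lines)


# Hypothetical demonstrations for an English-to-French task.
demonstrations = [
    ("sea otter", "loutre de mer"),
    ("good morning", "bonjour"),
]

few_shot = build_few_shot_prompt("Translate English to French:", demonstrations, "cheese")
zero_shot = build_few_shot_prompt("Translate English to French:", [], "cheese")

print(few_shot)
# Translate English to French:
# sea otter => loutre de mer
# good morning => bonjour
# cheese =>
```

The only difference between the zero-shot, one-shot, and few-shot settings is how many demonstration lines appear before the open query.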

Use-case

GPT-3 demonstrates strong utility across many domains, achieving competitive or state-of-the-art performance in tasks such as closed-book question-answering and translation. It exhibits one-shot and few-shot capability for synthetic tasks requiring rapid adaptation, including performing multi-digit arithmetic, unscrambling words, and using novel words in sentences. Furthermore, when generating synthetic news articles, GPT-3's outputs were difficult for human evaluators to distinguish from human-written articles, showcasing its high-quality text generation. Broadly, beneficial societal applications include grammar assistance, code/writing auto-completion, and enhancing search engine responses.

Future Work

Despite its strengths, GPT-3 struggles significantly with certain comparison tasks like determining if one sentence implies another, or comparing word meanings. A potential algorithmic improvement involves creating a large bidirectional model to combine GPT-3's scale with bidirectionality, which may resolve weaknesses in comparison tasks or reading comprehension. While this paper focuses on in-context learning, evaluating GPT-3 in the traditional fine-tuning setting remains an important direction for future work. Methodologically, future training must incorporate more aggressive data contamination removal to resolve the bugs discovered in the initial filtering process.

You can download the article here.