Dear Sentinels

Hi there, and welcome to the second weekly newsletter! I am still experimenting with the format. First up is a list of useful stories I have seen over the last couple of weeks, then the article, and then our research review, which this week covers the famous paper "Attention Is All You Need".

Last but not least, I was sitting in the office typing up this newsletter when a student who has an internship at our department walked up and introduced himself. We had a long conversation, and by the end of it, I had a new subscriber! He also gave me some pointers on the article below.

News from around the web

Signs of the Internet's collapse, or of us netizens fighting back:

Wikipedia and its AI Problem

Wikipedia has long been a foundation of the Internet, a trusted resource that provides the factual backbone for countless articles, school projects, and late-night curiosity dives (okay, I know its factual reliability is not that strong in certain cases, but give me a break, mmmkay). This is why a recent announcement from the Wikimedia Foundation landed like an odorous fart with bits in it. After updating its systems to filter out sophisticated bot traffic, the organisation revealed that Wikipedia is bleeding human visitors. The primary culprit is a fundamental shift in how we find information. The very AI technology that trains on Wikipedia's vast library of knowledge is now drawing readers away from it. Compounded by a move toward social media for search, this trend is creating a paradox that threatens the entire open web.

AI-powered search summaries answer user questions directly on the search results page. The AI search bots do provide references, but the human running the search will rarely click on them unless they want more detail than the summary gives. The content is still being used, but the creators and platforms that produce it are becoming invisible, and the traffic they rely on is vanishing.

The data reveals a stark picture of this new reality:

Wikipedia's human page-views over recent months were roughly 8% lower than in the same months of 2024, a decline that only became visible once it began filtering out sophisticated bot traffic.

When Google displays an AI Overview, the share of searches that end in a click through to a website falls from 15% to just 8%. That is a fall of seven percentage points, or nearly half in relative terms.

Google now uses AI summaries for 60% of questions that start with who, what, when, or why.
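A quick aside on the click figures above, because percentage points and relative percentages are easy to mix up. The sketch below simply redoes the arithmetic on the 15% and 8% figures; the function name is my own and purely illustrative.

```python
def relative_drop(before: float, after: float) -> float:
    """Return the fall from `before` to `after` as a fraction of `before`."""
    return (before - after) / before


click_rate_without_summary = 15.0  # percent of searches ending in a click
click_rate_with_summary = 8.0      # percent when an AI Overview is shown

point_drop = click_rate_without_summary - click_rate_with_summary
fraction_lost = relative_drop(click_rate_without_summary, click_rate_with_summary)

print(f"{point_drop:.0f} percentage points, {fraction_lost:.1%} relative")
# prints "7 percentage points, 46.7% relative"
```

So the absolute fall is seven points, but from a publisher's point of view almost half of the clicks are gone.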

If the people and platforms that create the web's knowledge no longer receive traffic, who's going to keep creating and updating it? This disappearing traffic isn't just a data point; it's an existential threat!

The Web is Staging a Revolt

Publishers and platforms are not passively accepting this new reality. Across the Internet, a movement described as a web infrastructure revolt is taking shape as creators seek ways to regain control over how their content is used. A primary example is Cloudflare's "Content Signals Policy." This is a new standard for robots.txt files (the simple text files that give instructions to web crawlers) that allows website owners to state their preferences clearly.

The policy introduces three simple signals:

search: Can the content be used to build a search index? (yes/no)

ai-input: Can the content be used as an input to answer questions in real-time? (yes/no)

ai-train: Can the content be used to train or fine-tune AI models? (yes/no)

For instance, a website owner who wants to be included in traditional search but wants to block AI training can add a line like this to their robots.txt file: Content-Signal: search=yes, ai-train=no. This isn't a technical block, but it is a clear, standardised policy statement. Cloudflare is already turning the concept into concrete action by deploying it for the 3.8 million domains that use its managed robots.txt feature, giving creators a formal way to declare their rules.
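To make that a little more concrete, here is a minimal sketch of how a well-behaved crawler might read those signals. It is not Cloudflare's implementation: the function name parse_content_signals is hypothetical, and the sketch ignores which User-Agent group a line belongs to, which a real parser would have to respect.

```python
def parse_content_signals(robots_txt: str) -> dict[str, bool]:
    """Collect Content-Signal preferences from robots.txt text.

    Simplification (assumption): every Content-Signal line is treated as
    applying to all crawlers, regardless of its User-Agent group.
    """
    signals: dict[str, bool] = {}
    for raw_line in robots_txt.splitlines():
        line = raw_line.split("#", 1)[0].strip()  # drop comments
        name, separator, value = line.partition(":")
        if not separator or name.strip().lower() != "content-signal":
            continue
        for pair in value.split(","):
            key, equals, setting = pair.partition("=")
            if equals:
                signals[key.strip().lower()] = setting.strip().lower() == "yes"
    return signals


example_robots_txt = """\
User-Agent: *
Content-Signal: search=yes, ai-train=no
Allow: /
"""

signals = parse_content_signals(example_robots_txt)
print(signals)  # {'search': True, 'ai-train': False}
```

Note that ai-input does not appear in the result. The site owner has simply not stated a preference for it, which is different from saying yes or no.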

Research Review: Attention Is All You Need

Summary

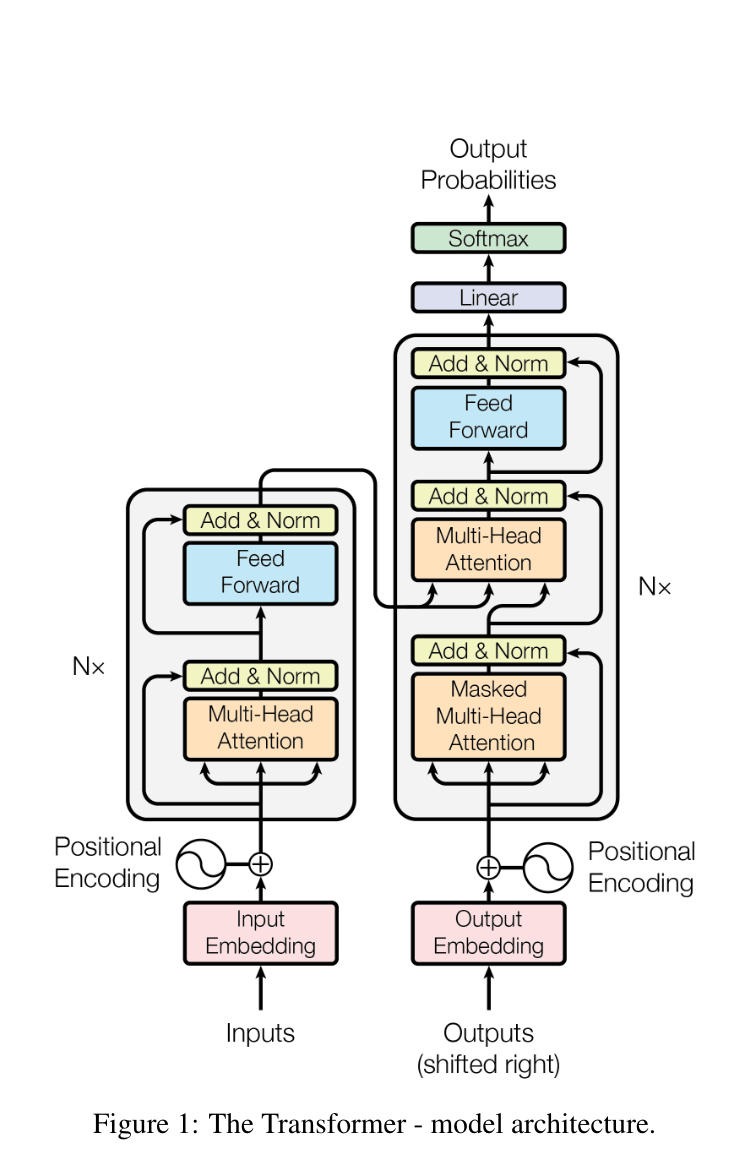

The Transformer is a novel network architecture proposed to replace complex recurrent and convolutional neural networks entirely with attention mechanisms. This model achieves state-of-the-art results on machine translation tasks, offering superior quality, enhanced parallelisation, and substantial reductions in training time.

Background

Dominant sequence transduction models typically rely on complex recurrent neural networks, such as Long Short-Term Memory (LSTM) or gated recurrent neural networks, or on convolutional networks. The best-performing of these models also connect the encoder and decoder through an attention mechanism. However, recurrent models inherently factor computation sequentially along symbol positions, so each step has to wait for the previous one. This severely limits parallelisation, which becomes a critical issue at longer sequence lengths. The Transformer aims to overcome this fundamental constraint by entirely eschewing recurrence and instead relying solely on an attention mechanism to draw global dependencies between input and output.
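For readers who like to see the idea in code: the paper's core operation is scaled dot-product attention, softmax(QK^T / sqrt(d_k)) V. The sketch below is my own dependency-free illustration using plain Python lists, not the authors' code. Real implementations use matrix libraries and add multiple heads, masking and learned projections. The function names and the toy numbers are assumptions made for the example.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(queries, keys, values):
    """Compute softmax(Q K^T / sqrt(d_k)) V, one query row at a time."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity of this query with every key, scaled by sqrt(d_k).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys
        ]
        weights = softmax(scores)
        # The output is a weighted average of the value vectors.
        outputs.append([
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ])
    return outputs


# Toy input: 2 queries attending over 3 key/value pairs.
queries = [[1.0, 0.0], [0.0, 1.0]]
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
attended = scaled_dot_product_attention(queries, keys, values)
```

The point to notice is that no query row depends on the result of another. Every position can be computed at the same time, which is exactly the parallelism that a recurrent network cannot offer.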

Use-case

The Transformer's primary application demonstrated in this work is sequence transduction, achieving new state-of-the-art results on the WMT 2014 English-to-German and English-to-French machine translation tasks. On the English-to-German task, the "big" model established a new state-of-the-art BLEU score of 28.4, surpassing all previous models, including ensembles. The Transformer also generalised successfully to English constituency parsing, a task characterised by strong structural constraints and by outputs that are significantly longer than the inputs. For constituency parsing, the model performed surprisingly well despite the lack of task-specific tuning, yielding better results than all previously reported models except the Recurrent Neural Network Grammar.

Future Work

The authors are excited about the future of attention-based models and intend to apply the Transformer architecture to various other tasks. A key area of research is extending the Transformer to problems involving input and output modalities other than text, such as images, audio, and video. To handle very long sequences efficiently, they plan to investigate local, restricted-attention mechanisms that limit attention to a neighbourhood of size r in the input sequence. Making the generation process less sequential is noted as another core research goal for the future.
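To get a feel for why restricted attention helps with long sequences, here is a small sketch that counts how many position pairs need a score. It rests on my own assumption that "a neighbourhood of size r" means up to r positions on either side of the current one; the paper does not pin down the exact window, and the function names are illustrative.

```python
def local_attention_window(position: int, sequence_length: int, r: int) -> range:
    """Positions that `position` may attend to: at most r neighbours each side."""
    start = max(0, position - r)
    stop = min(sequence_length, position + r + 1)
    return range(start, stop)


def attended_pairs(sequence_length: int, r: int) -> int:
    """Count the (query, key) pairs scored under local attention."""
    return sum(
        len(local_attention_window(i, sequence_length, r))
        for i in range(sequence_length)
    )


sequence_length = 1000
full_pairs = sequence_length * sequence_length   # every position sees every other
local_pairs = attended_pairs(sequence_length, r=4)

print(full_pairs, local_pairs)  # 1000000 8980
```

Full self-attention grows with the square of the sequence length, while the local version grows roughly in proportion to the length times the window size. The trade-off is that distant positions can no longer see each other within a single layer.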

You can get the research paper here.