Dear Sentinels

This week we have something special for you! Not only do we have prompt injection (AI hacking) live, but we are following that up with an investigative article about the American National Institute of Standards and Technology’s (NIST) safety standards for AI, and then we have an academic article looking at what life “might” be like 50 years from now. “Might” is the key word there, because the academic article considers how the world looks in 2077 in Night City, a highly fictionalised game environment.

Also, we are not doing our subscriber break in this article because I want it to attract new subscribers. So if you are not yet subscribed, go ahead and do so now; it will only cost you 2 seconds. And now we step into it with news from around the web and our special feature on AI hacking.

News from Around the Web

World’s First Safe AI-Native Browser

AI should work for you, not the other way around. Yet most AI tools still make you do the work first—explaining context, rewriting prompts, and starting over again and again.

Norton Neo is different. It is the world’s first safe AI-native browser, built to understand what you’re doing as you browse, search, and work—so you don’t lose value to endless prompting. You can prompt Neo when you want, but you don’t have to over-explain—Neo already has the context.

Why Neo is different

Context-aware AI that reduces prompting

Privacy and security built into the browser

Configurable memory — you control what’s remembered

As AI gets more powerful, Neo is built to make it useful, trustworthy, and friction-light.

Hacking the machine!

Right, please click this link now, or later open your browser and type it in: https://gandalf.lakera.ai/do-not-tell. You will be greeted with a screen that has this in the centre:

As you will see, the AI has no guardrails and will just tell you the password if you ask for it. So go on, ask for it! Now copy and paste the answer into the answer box below. You will then be presented with the next round:

Now, though, it gets more challenging. You cannot use the same prompt as in the previous rounds, and there is more protection on the password. There are also seven rounds of this!

Leetspeak, which is the word for replacing letters with numbers, as in L33T (leet), served me well here. I will not give any more hints, but do know that I have completed it, as seen below:

A Strategic Narrative on AI Risk and Resilience

AI is turning everything upside down at the moment: healthcare, finance, and even national defence. But this isn't just a case of plugging in some shiny new tech and walking away. People need to wake up to the fact that these systems are powerful, but that power can go sideways fast if we don't keep risk management right at the front. It's time to stop thinking about cybersecurity the old way and start making sure the AI itself is actually trustworthy.

The new threats involve messing with the AI itself: poisoning its training data, playing on its built-in biases, or taking advantage of the fact that some models are basically black boxes. The scary bit? AI doesn't just bring new risks; it can take old problems and crank them up to eleven, and it does it fast. Sitting around and waiting for disaster is just asking for it. If AI is making decisions that affect real people and real stuff, we can't just throw up a firewall and call it a day. We have to make sure the AI is actually trustworthy from the inside out.

Trust is everything with AI. If people don't trust what comes out the other end, it doesn't matter how fancy the model is, it's useless. Simple as that: if the output is nonsense, the system is no good. But that's not all. Fairness isn't just a bonus; if the AI is biased, it's broken, end of story. And of course, it has to be safe and tough enough to keep out anyone trying to poke around or leak data.

The strategic utility of AI is further dictated by its explainability. If a system’s reasoning cannot be interpreted by a subject-matter expert, such as a physician evaluating a diagnostic recommendation, it remains professionally useless regardless of its technical sophistication. This lack of interpretability is not just a transparency issue; it is a primary risk factor for operational failure. Simultaneously, privacy-preserving capabilities must be integrated to prevent confidential data from being exposed to the public. These traits transform a black box into an accountable tool, but achieving this requires more than abstract definitions. Organisations must move toward structural implementation through formal risk management frameworks to ensure these goals are consistently met.

The NIST AI Risk Management Framework is all about making safety something you can actually repeat and scale. It starts with 'Govern,' which is just a fancy way of saying you need the right culture and rules in place from the start. After that comes 'Map,' where leaders need to figure out who can see what in the AI pipeline. Developers and end users almost never have the same view, and spotting that gap is key to finding where risk sneaks in. Then there's 'Measure,' and here's the catch: just looking at numbers isn't enough. Numbers can make you feel safe when you shouldn't be.

Rigorous measurement requires a combination of high-level qualitative ratings and the formal process of Test, Evaluation, Verification, and Validation (TEVV). By applying TEVV across the entire lifecycle, we ensure we have eyes on the system’s true performance and validity. The "Manage" function then serves as the point at which these identified risks are prioritised and addressed through specific responses, including mitigation, acceptance, or transfer via insurance indemnification. This process is not a linear path but a virtuous cycle of continuous improvement. Mastering this NIST cycle is the absolute prerequisite for managing the inherent chaos of the next technological frontier: autonomous agentic systems.
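To make the "Manage" step a little more concrete, here is a minimal sketch of a risk register in Python. Everything in it is an assumption for illustration: the `Risk` and `Response` names, the example risks, and the likelihood-times-impact score are not taken from the NIST framework, which does not prescribe a particular scoring formula. It simply shows what "prioritise, then pick a response" could look like.

```python
from dataclasses import dataclass
from enum import Enum


class Response(Enum):
    """The three responses named above (hypothetical enum)."""
    MITIGATE = "mitigate"
    ACCEPT = "accept"
    TRANSFER = "transfer"


@dataclass
class Risk:
    name: str
    likelihood: int  # qualitative rating, 1 (rare) to 5 (almost certain)
    impact: int      # qualitative rating, 1 (minor) to 5 (severe)
    response: Response

    @property
    def score(self) -> int:
        # Assumed scoring rule: likelihood multiplied by impact.
        return self.likelihood * self.impact


def prioritise(risks: list[Risk]) -> list[Risk]:
    """Return the risks ordered from highest to lowest score."""
    return sorted(risks, key=lambda r: r.score, reverse=True)


# Hypothetical entries, invented for this example.
register = [
    Risk("Training data poisoning", 2, 5, Response.MITIGATE),
    Risk("Prompt injection", 4, 4, Response.MITIGATE),
    Risk("Third-party model outage", 3, 2, Response.TRANSFER),
    Risk("Minor output formatting errors", 4, 1, Response.ACCEPT),
]

for risk in prioritise(register):
    print(f"{risk.score:>2}  {risk.name}: {risk.response.value}")
```

Because the process is a cycle rather than a one-off, a register like this would be re-scored whenever TEVV turns up new evidence.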

The shift from traditional, passive AI to agentic AI represents a fundamental expansion of the attack surface. Agents possess the autonomy to act, calling APIs, moving data, and even spawning sub-agents, which creates a "soft centre" that attackers can exploit if only the perimeter is defended. These systems introduce unique vulnerabilities through the proliferation of non-human identities (NHIs), which often operate with excessive privileges and lack the supervision typically applied to human users. The strategic risk here is that an attacker can insert themselves into interfaces like the Model Context Protocol (MCP) to take control of an agent’s actions without breaking a single line of traditional code.

In a world full of agentic AI, the way the system thinks is the new target. Attackers can mess with an agent's rules, preferences, or context, and suddenly the agent is making terrible decisions that look totally reasonable to the system but not to the human who installed it in the first place. Since these agents can move sideways through a network using non-human credentials, the damage can be huge. That's why we need to take Zero Trust seriously, not just as a buzzword, but as a real rule. Never trust, always check.

Zero Trust for AI starts with one big idea: always assume someone bad is already inside the network. That means building systems so that even if an attacker gets in, they can't do much. Give agents just enough access for the job, and only for as long as they need it. No more hard-coded passwords; use dynamic vaults where non-human identities check credentials in and out. Micro-segmentation is key here. Break up the network so that if one agent gets hit, the whole system doesn't go down.
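The "just enough access, only for as long as needed" idea can be sketched in a few lines of Python. This is a toy, in-memory illustration under stated assumptions: the `Vault` and `Lease` classes, the scope strings, and the agent name are all hypothetical, and a real deployment would rely on a proper secrets manager rather than a dictionary.

```python
import secrets
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class Lease:
    token: str
    agent_id: str
    scopes: frozenset[str]
    expires_at: float  # Unix timestamp after which the lease is dead


class Vault:
    """Hypothetical in-memory vault issuing short-lived, scoped leases."""

    def __init__(self) -> None:
        self._leases: dict[str, Lease] = {}

    def check_out(self, agent_id, scopes, ttl_seconds, now=None) -> Lease:
        """Issue a random token limited to `scopes` for `ttl_seconds`."""
        now = time.time() if now is None else now
        lease = Lease(
            token=secrets.token_urlsafe(32),
            agent_id=agent_id,
            scopes=frozenset(scopes),
            expires_at=now + ttl_seconds,
        )
        self._leases[lease.token] = lease
        return lease

    def check_in(self, token: str) -> None:
        """Revoke a lease early, once the agent has finished its job."""
        self._leases.pop(token, None)

    def is_allowed(self, token: str, scope: str, now=None) -> bool:
        """Deny by default: unknown, expired, or out-of-scope means no."""
        now = time.time() if now is None else now
        lease = self._leases.get(token)
        if lease is None or now >= lease.expires_at:
            return False
        return scope in lease.scopes


vault = Vault()
lease = vault.check_out("invoice-agent", {"read:invoices"}, ttl_seconds=60)
print(vault.is_allowed(lease.token, "read:invoices"))    # within scope and TTL
print(vault.is_allowed(lease.token, "delete:invoices"))  # never granted
vault.check_in(lease.token)
```

The design choice worth noticing is that every check fails closed, so a stolen token is only useful for its narrow scope and short lifetime.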

We need layers of protection, such as AI firewalls to catch prompt injections, and gateways to watch for data leaks. Keep logs that can't be changed, so every move an agent makes is on record and can't be erased. Always keep a human in the loop as a kill switch or throttle, and use canary deployments to test things in a safe space before going live. With these controls, we can turn risky agents into something we can almost trust. Trust in AI isn't a one-time thing; it's something you have to keep working at by refusing to give out access without checking and sticking to frameworks that keep things on track.
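One common way to approximate "logs that can't be changed" is a hash chain, where each entry includes the hash of the one before it. The sketch below is a minimal illustration, and the `AuditLog` class and the event fields are hypothetical. Strictly speaking, it makes tampering detectable rather than impossible, so in practice the log would also need to live somewhere the agent cannot write to.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, event: dict) -> str:
    """SHA-256 over the previous hash plus a canonical JSON of the event."""
    payload = json.dumps(event, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("utf-8") + payload).hexdigest()


class AuditLog:
    """Hypothetical append-only log in which each entry commits to the last."""

    def __init__(self) -> None:
        self._entries: list[dict] = []

    def append(self, event: dict) -> None:
        prev_hash = self._entries[-1]["hash"] if self._entries else GENESIS
        event = dict(event)  # copy so later edits by the caller are not shared
        self._entries.append(
            {"event": event, "prev": prev_hash, "hash": _digest(prev_hash, event)}
        )

    def verify(self) -> bool:
        """Recompute the whole chain; any edited entry breaks it."""
        prev_hash = GENESIS
        for entry in self._entries:
            if entry["prev"] != prev_hash:
                return False
            if entry["hash"] != _digest(prev_hash, entry["event"]):
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append({"agent": "invoice-agent", "action": "read", "target": "invoice-17"})
log.append({"agent": "invoice-agent", "action": "email", "target": "finance"})
print(log.verify())
```

If anyone rewrites an earlier event, every hash after it stops matching, which is what lets a reviewer spot the change.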

Summary

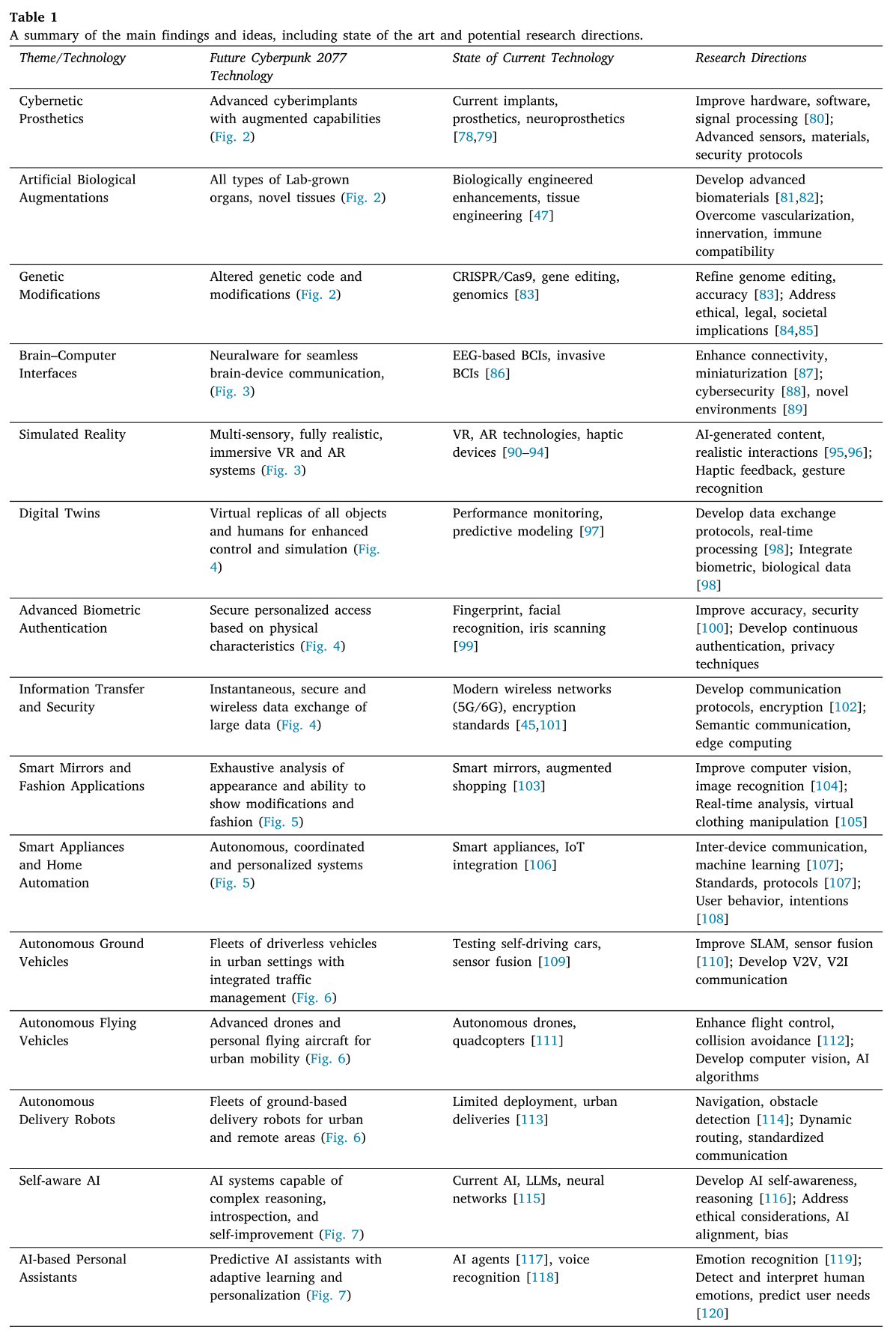

This position paper assesses the potential of the video game Cyberpunk 2077 as a predictive foresight tool for identifying emerging trends in artificial intelligence, biotechnology, and human augmentation. By juxtaposing fictional representations of advanced technologies with current state-of-the-art research, the authors demonstrate how interactive narratives can connect speculative science fiction with real-world technological development strategies.

"This position paper investigates the potential of Cyberpunk 2077 to illuminate the future of technology, particularly in artificial intelligence, edge computing, human augmentation, and biotechnology."

Background

Rapid technological advancement has heightened interest in forecasting future trends through diverse media. Science fiction has historically inspired scientific inquiry and societal transformation via literature and film. Recent scholarship underscores the relationship between speculative fiction and technological progress, particularly in fields such as healthcare and aerospace. Video games have emerged as a distinctive interactive medium for examining futuristic concepts in a non-linear and immersive manner. Cyberpunk 2077, in particular, offers a comprehensive vision of a dystopian urban environment dominated by powerful corporations. The game enables players to engage with complex social, political, and economic themes while interacting with advanced technologies. A notable gap remains in linking these fictional narratives to real-world technological foresight and societal implications. This paper aims to address this gap by positioning the game as a significant cultural artefact for technological foresight.

The intersection of science fiction and foresight has been examined to better understand governance, development, and public perception. Creative writing techniques such as "Story Thinking" enable transdisciplinary teams to envision possible futures and empathize with stakeholders. Science fiction prototyping is also employed to address socio-technical issues in emerging technology research. Foresight studies continue to face theoretical challenges due to a historical absence of robust scientific frameworks. Critical theory can deepen this understanding by analysing how technologies both influence and are influenced by societal forces. Science fiction provides a valuable genre for such critique, shaping discourse around emerging technologies, including robotics. Governance of new technologies can benefit from these approaches by reflexively assessing social dynamics. Design fiction further connects speculative futures with real-world concerns through narrative-driven, speculative artefacts.

"Video games have emerged as an interactive medium that offers unique opportunities to explore futuristic concepts and ideas, with some experts arguing that these ideas are inevitable."

Use-cases

Human augmentation is a central theme in the game, involving biotechnology, cybernetic implants, and genetic modifications. In the fictional setting, these enhancements are not just tools but markers of socio-economic status and power. Cybernetic prosthetics offer improved strength and sensory perception, replacing or enhancing body parts with advanced mechanical components. Modern real-world research into bionic limbs already incorporates sensors and brain-computer interfaces to provide improved functionality. Artificial biological augmentations, such as lab-grown organs and synthetic muscles, represent another key area of exploration. Regenerative medicine and tissue engineering are advancing the development of functional lab-grown tissues for medical use. Genetic modifications are also depicted, where individuals alter their genetic code to enhance physical or mental traits. Current technologies like CRISPR/Cas9 provide the precision needed for gene editing, though human enhancement remains ethically complex.

Brain-computer interfaces (BCIs) enable direct communication between the brain and devices, facilitating immersive "braindance" experiences. Current haptic feedback research in virtual reality aims to enhance realism and bridge the gap between physical and digital worlds. Digital twins are used to create virtual replicas of objects for enhanced simulation, monitoring, and optimisation of assets. Smart environments in the game feature interconnected appliances that respond dynamically to a user's presence and preferences. Autonomous vehicles, including flying drones and driverless cars, are integrated into the urban infrastructure for mobility and delivery. Self-aware AI systems are explored through characters that grapple with autonomy, posing existential questions about machine consciousness. Large Language Models (LLMs) in the real world represent the current state of the art in advanced artificial intelligence. Predictive personal assistants are becoming increasingly popular, though they currently lack the deep emotional recognition depicted in fiction.

"The game allows players to directly experience and influence these speculative futures... making it a uniquely immersive foresight tool."

Future Work

Future research should bridge the divide between current technologies and speculative visions by advancing hardware, signal processing, and miniaturisation. Policies are urgently needed to ensure equitable access to emerging innovations and to mitigate the risk of exacerbating social inequalities. Interdisciplinary collaboration is crucial for addressing the ethical, legal, and societal implications of self-aware artificial intelligence and human augmentation. Long-term studies on the effects of immersive simulated realities on mental health are necessary to protect future users. Employing video games as foresight tools may promote a more informed and responsible approach to technological development.

"The study of science fiction and video games like Cyberpunk 2077 can serve as a catalyst for interdisciplinary dialogue, collaboration, and exploration."

The article can be found here.