Dear Sentinels,

Today, we are looking at Natural Language Processing (NLP) with Python, and after that, we are examining an extraordinary academic article on sparsely-activated models. NLP is a new direction I want to take by offering training that is open and accessible to all. I will run a poll on that during the year, as there are a number of issues that I need to sort through first.

Then, with the article, I am trying a "new thing": longer, two-paragraph sections in the middle. I will see how that goes in this week's and next week's editions of my newsletter. And thank you to my two "volunteers" who are reading this edition and the next one before they go live.

But first, it is time to go live with news from around the web:

News from around the web

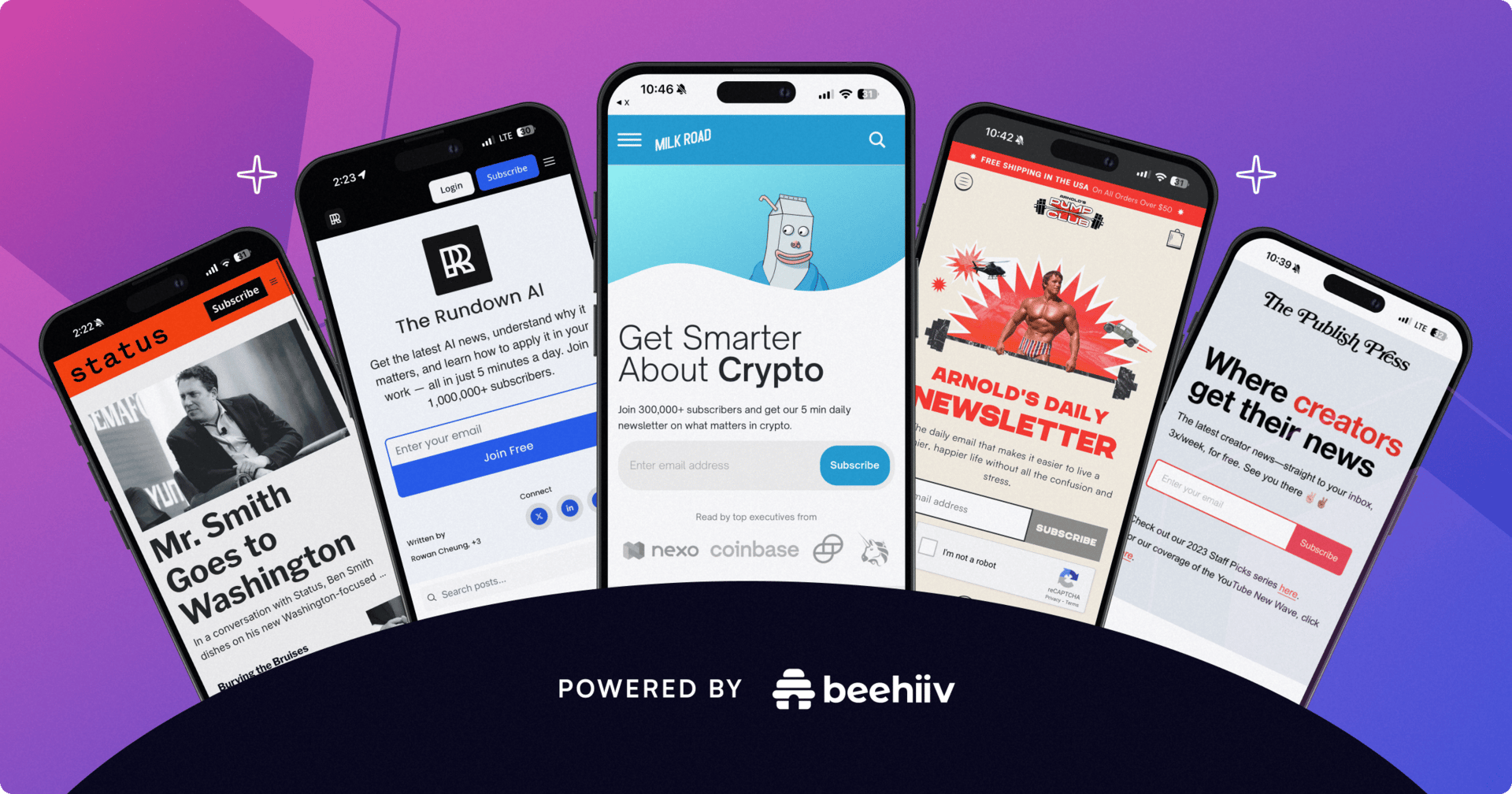
